The technical exploration started with photogrammetry as a way of capturing and rendering buildings and spaces around the city. While processing the photogrammetry scans, I discovered that the software creates point clouds, which I found visually enchanting. I have never been fond of the hyper-realistic 3D graphics of video games, and point clouds offered more texture and a more painterly rendering. I then explored 3D modelling software and game engines as the final tools in the production pipeline.

Photogrammetry

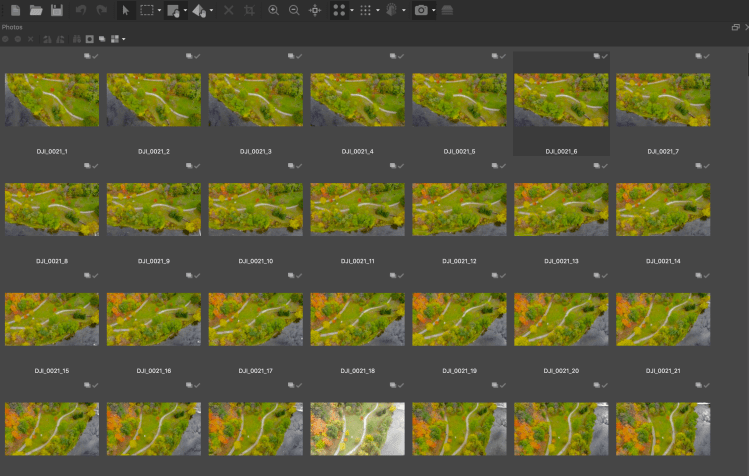

Photogrammetry is a 3D scanning technique that can produce accurate photorealistic models of an object, person, building, or location. The process involves taking numerous high-resolution photographs (dozens, hundreds or sometimes thousands) of the site or building from as many vantage points as possible. The photographs are then imported into specialized software that uses the camera settings and algorithms to compare how the subject appears in different photographs and reconstruct its shape and depth.

Point clouds

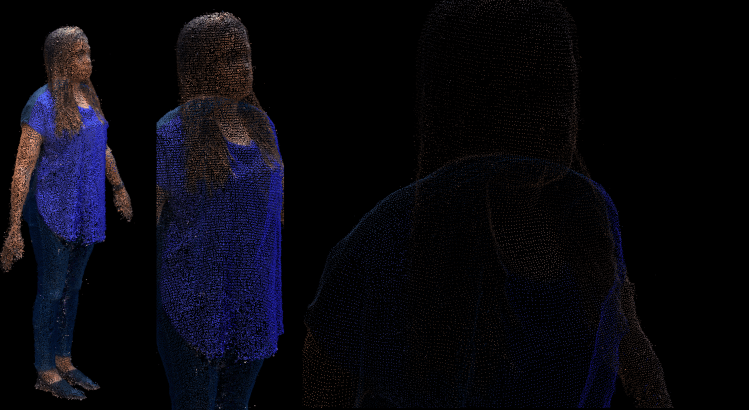

As it processes the photographs, the software outputs point clouds (data sets of points in space) as an intermediate step before the final full 3D model. Each point is a set of X, Y, Z coordinates as well as a fourth number representing its colour. The texture of the point clouds was enchanting to me. Their main characteristic is that their appearance changes with the viewer’s location. From far away, they look opaque, but they become progressively more transparent with proximity. They are the perfect metaphor for the history that haunts the city but remains invisible.
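As a minimal sketch of the data structure described above, a point cloud can be modelled as a plain list of points, each holding three coordinates and one colour value. The `Point` type, the helper names and the `0xRRGGBB` packing of the colour into a single integer are assumptions made for illustration; actual point cloud formats vary, and many store red, green and blue as separate channels.

```python
from typing import List, NamedTuple, Tuple


class Point(NamedTuple):
    """One point of the cloud: a position plus a single colour number."""
    x: float
    y: float
    z: float
    colour: int  # assumed packing: 0xRRGGBB (illustrative, not a specific file format)


def pack_rgb(r: int, g: int, b: int) -> int:
    """Pack three 8-bit channels into one integer."""
    return (r << 16) | (g << 8) | b


def unpack_rgb(colour: int) -> Tuple[int, int, int]:
    """Recover the three 8-bit channels from the packed integer."""
    return (colour >> 16) & 0xFF, (colour >> 8) & 0xFF, colour & 0xFF


# A tiny hypothetical cloud of three points.
cloud: List[Point] = [
    Point(0.0, 0.0, 0.0, pack_rgb(200, 180, 150)),
    Point(0.1, 0.0, 0.2, pack_rgb(190, 170, 140)),
    Point(0.0, 0.3, 0.1, pack_rgb(60, 60, 70)),
]
```

Nothing in this structure connects one point to another, which is part of why a cloud reads as a loose texture rather than a solid surface.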

The point clouds were also the answer to my quest for a digital process that produced detailed yet imperfect textures. Small (and sometimes not so small) imperfections occur in the resulting 3D scans as digital glitches. Extraneous points can be removed, but additional ones cannot be added to repair holes. In searching for a way around the mainstream video game aesthetic and the cleanliness of its lines, I found the perfect imperfection of point clouds, whose artefacts remain as a manifestation of what is lost in translation from the physical world to the virtual 3D model.
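Removing extraneous points can be pictured as a simple filter. The sketch below keeps only the points that fall inside an axis-aligned box and discards the rest. It is an illustration under assumed names (`crop_to_box`, `scan`) and made-up coordinates, not a description of how any particular photogrammetry package cleans its scans.

```python
from typing import List, Tuple

Coordinates = Tuple[float, float, float]


def crop_to_box(
    points: List[Coordinates],
    lower: Coordinates,
    upper: Coordinates,
) -> List[Coordinates]:
    """Keep only the points whose x, y and z all lie within the box."""
    return [
        p for p in points
        if all(lo <= c <= hi for c, lo, hi in zip(p, lower, upper))
    ]


# Hypothetical scan: two points on the subject and two stray outliers.
scan = [
    (0.2, 0.5, 1.0),
    (0.9, 0.1, 0.4),
    (12.0, 0.3, 0.8),   # stray point far along x
    (0.5, -7.5, 0.2),   # stray point far along y
]

kept = crop_to_box(scan, lower=(0.0, 0.0, 0.0), upper=(1.0, 1.0, 2.0))
```

A filter of this kind can only subtract. Any hole left in the scan stays a hole, which matches the asymmetry described above.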

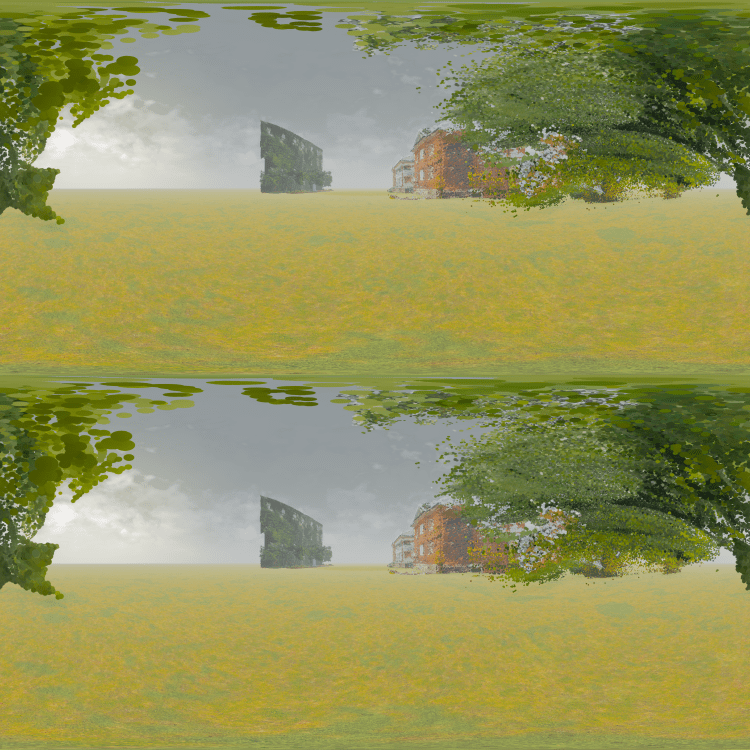

The difficulty with point clouds, however, is that, as data, they are displayed differently by different software, as illustrated above. In both Metashape and A-Frame, they are displayed as very fine points. In the game engines Unity and Unreal, however, the points appear chunkier. Although it is possible to get a very fine texture of points in Unreal, points that are too small virtually disappear. They therefore have to be displayed at a larger size that lacks the finesse and the modulation that drew me to point clouds. They do, however, display a painterly texture that distinguishes them from the flatter video game aesthetic.

3D modelling

Unreal Engine was chosen as the tool to bring all the elements together to create the virtual reality experience. It is where the photogrammetry scans rendered as point clouds were integrated to produce 3D environments in combination with directional sound, a 3D animated character and other animations. The platform enables the addition of lighting, fog and other atmospheric elements in the creation of scenes that can then be exported for display in a headset.

Character design

Because of financial and time constraints, Thérèse was created from a purchased asset, and there were limitations on the customization that could be done. The 3D mesh could not be easily altered, so we focused on the textures, which could be more readily modified to point to her futurity, and on changing some of the colours of her clothing to give her more presence.

Output

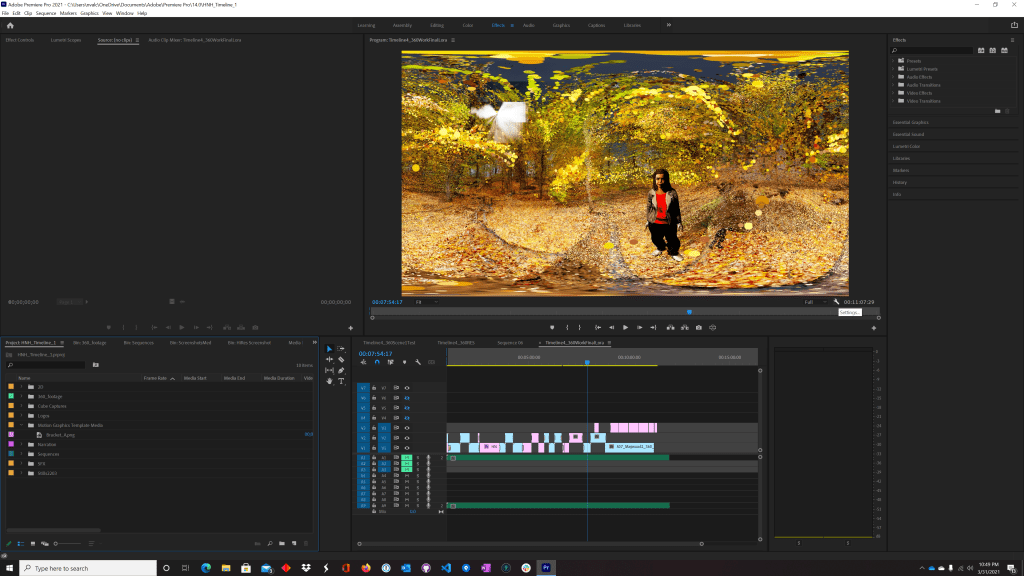

The frames rendered from Unreal Engine were then brought into Premiere Pro, edited into a 360 video sequence with mixed audio and then exported.

Technical limitations

My original desire was to have a virtual reality experience with 6 degrees of freedom (6DoF) in which participants would be able to walk around and explore the space. Given the tremendous computational demands of point clouds, the decision was made to create a project with 3 degrees of freedom (3DoF), which means that the participant can look all around them (a full 360 degrees) from a fixed position, but not roam independently. This allows the point clouds to be pre-computed and rendered for display in a headset, rather than rendered in real time, which would cause significant lag.

The full output from Unreal Engine was rendered frame by frame to get the best possible resolution. Again, because of the computational difficulties with point clouds, the outputs were quite time-consuming, averaging at least 1 hour of render time per second of footage at 30 frames per second. Major compromises had to be made at the output stage in terms of reducing camera movements and animations to make the export feasible.
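To put those figures in perspective, the short calculation below takes them at face value: 1 hour of rendering per second of footage, at 30 frames per second. Since the 1 hour figure is stated as a minimum, the results are lower bounds. The function names and the 180-second duration are hypothetical, chosen only to show the scale, and do not describe the actual length of the piece.

```python
def seconds_per_frame(render_hours_per_footage_second: float, fps: int) -> float:
    """Render time for a single frame, in seconds."""
    return render_hours_per_footage_second * 3600 / fps


def total_render_hours(
    footage_seconds: float,
    render_hours_per_footage_second: float = 1.0,
) -> float:
    """Total render time, in hours, for a piece of the given duration."""
    return footage_seconds * render_hours_per_footage_second


# One hour per second of footage at 30 fps: 3600 s / 30 frames = 120 s per frame.
per_frame = seconds_per_frame(1.0, 30)

# Hypothetical duration for illustration only: a 3-minute (180-second) sequence.
example_hours = total_render_hours(180)
```

At roughly two minutes per frame, a sequence of only a few minutes already adds up to days of continuous rendering, which is why camera movements and animations had to be reduced.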

The project was rendered in two different resolutions: a monoscopic 2K version for streaming online and a stereoscopic 4K version for headset. The monoscopic version flattens the point clouds, completely obliterating their visual characteristics. Additional research is needed to find more tools and workflows for working with point clouds and the challenges they present.

Produced as part of

the Digital Futures graduate program at OCAD University